Evolution of digital twins for floating production systems

Over the past five years, the push toward digital twins has continued. Oilfield digital twins have typically functioned either as an information repository or as an analytical engine. The next generation of digital twins will combine these functionalities with additional capabilities, to become comprehensive design and integrity management (IM) tools.

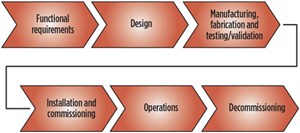

Evolution of IM and maintenance strategies. The release of the API suite of integrity management recommended practices for floating systems, risers and mooring (2FSIM, 2RIM & 2MIM) in September 2019 provides further industry guidance for appropriate integrity management and maintenance strategies. One of the primary recommendations of these documents is that integrity management should cover the entire life cycle of the asset, starting with the development of functional requirements, Fig. 1.

Historically, an integrity management program is developed and implemented during the operations phase of the life cycle. This approach often can lead to significantly higher operating expenditures (OPEX) and capital expenditures (CAPEX). A simple example is the design of a structural connection that is difficult or impossible to inspect. While this may simplify the design or fabrication of the connection, the lack of inspectability may lead to the necessary replacement or reinforcement of the connection during the operations phase, especially if life extension activities are pursued. The cost of changing the design of the connection is typically much less than the cost to perform repairs offshore.

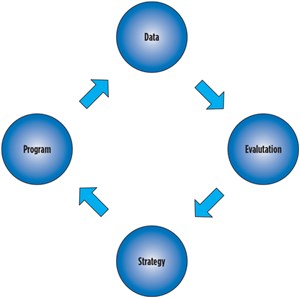

The generic IM process described by RP 2FSIM is shown in Fig. 2. Data describing the condition of the floating system are gathered and then evaluated to identify anomalies. A strategy is developed to address any concerns identified, and then a program is developed to implement any necessary mitigations. The cycle is continuous for the life of the floating system. The data describing the condition of the floating system can be:

- Documentation and drawings from the design, fabrication, installation or operations phase

- Inspection data and findings

- Instrumentation data from sensors

- Analysis data from design or assessments.

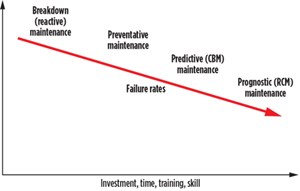

The strategy for integrity management and maintenance will vary with the complexity and criticality of a particular component. The Department of Defense’s Condition Based Maintenance Guidebook (CBM) presents four distinct maintenance strategies, recreated in Fig. 3. The strategy with the highest failure rate is breakdown maintenance (fix it when it breaks); the strategy with the lowest failure rate is reliability-centered maintenance (RCM). RCM is also referred to as prognostic maintenance. Implementing maintenance strategies to reduce failure rates requires additional investments in time, training and skill. The implementation of increasingly complex maintenance strategies should be considered on a cost-benefit basis, to determine which strategy is most appropriate. The cost-benefit analysis should include consideration of the cost of failure of the component. Million-dollar failures warrant more complex maintenance strategies than failures having negligible impact.
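The cost-benefit comparison can be illustrated with a minimal sketch. It assumes the expected annual cost of a strategy is its program cost plus its failure rate multiplied by the cost of a failure. Every number below is hypothetical, and the middle strategy is a placeholder rather than one of the guidebook's named strategies.

```python
from dataclasses import dataclass


@dataclass
class Strategy:
    name: str
    annual_program_cost: float  # USD per year to run the program (hypothetical)
    annual_failure_rate: float  # expected failures per year (hypothetical)


def expected_annual_cost(strategy, cost_of_failure):
    """Program cost plus the expected yearly cost of failures."""
    return strategy.annual_program_cost + strategy.annual_failure_rate * cost_of_failure


def cheapest_strategy(strategies, cost_of_failure):
    """Return the strategy with the lowest expected annual cost."""
    return min(strategies, key=lambda s: expected_annual_cost(s, cost_of_failure))


# Illustrative values only; not taken from the guidebook or any real asset.
STRATEGIES = [
    Strategy("breakdown", 0.0, 0.20),
    Strategy("intermediate (placeholder)", 20_000.0, 0.10),
    Strategy("reliability-centered", 120_000.0, 0.01),
]

low_consequence = cheapest_strategy(STRATEGIES, cost_of_failure=1_000.0)
high_consequence = cheapest_strategy(STRATEGIES, cost_of_failure=5_000_000.0)
```

Under these assumed inputs, the cheapest option shifts from breakdown maintenance toward RCM as the cost of failure grows, which is the trade-off the paragraph above describes.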

Advances in digital twins. The functionality of oilfield digital twins has typically focused on acting as either an information repository or as an analytical engine. When used solely for one of these purposes, the digital twin benefits are limited. A fully developed digital twin should combine both functionalities. This combined functionality also allows for additional capabilities to be developed, providing benefits for the life cycle of the asset.

One example is comparing design predictions to responses measured during the operation of the asset. Design predictions are performed using analytical models built with many assumptions. Comparing predictions to actual responses allows for the evaluation of the conservatism (or unconservatism) created by these assumptions, or provides a basis to refine the assumptions to provide more realistic predictions. The refinement of these model assumptions applies to not only the asset in operation, but also to any new asset being designed.

A specific example of this is the predicted offset of a floating facility, Fig. 4. The primary driver for offset is the drag created by the wind and current environmental loads. Typical design assumptions use drag coefficients determined by scale-model tests or industry best practices. The offset of a floating facility is typically monitored by GPS, while wind and current speeds are determined from metocean sensors. The automated capabilities of a digital twin allow for efficient parametric studies to determine accurate drag coefficients to best match the measured response. These refined drag coefficients can be used for future assessment of both the asset in operation and any new asset currently in design.
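A parametric study of this kind might be sketched as follows. The sketch makes strong simplifying assumptions that are not in the article: current is the only load, drag follows the standard quadratic law, and the mooring system behaves as a linear spring. The function names, projected area and stiffness are hypothetical.

```python
RHO_SEAWATER = 1025.0  # kg/m^3, typical seawater density


def predicted_offset(cd, current_speed, projected_area, mooring_stiffness):
    """Static offset (m) from quadratic current drag on a linear mooring spring."""
    drag_force = 0.5 * RHO_SEAWATER * cd * projected_area * current_speed ** 2  # N
    return drag_force / mooring_stiffness


def rms_error(cd, records, projected_area, mooring_stiffness):
    """RMS difference between predicted and measured offsets.

    records: list of (current_speed_m_per_s, measured_offset_m) pairs.
    """
    squared = [
        (predicted_offset(cd, speed, projected_area, mooring_stiffness) - measured) ** 2
        for speed, measured in records
    ]
    return (sum(squared) / len(squared)) ** 0.5


def calibrate_cd(records, projected_area, mooring_stiffness, candidates):
    """Sweep candidate drag coefficients and keep the best match to the data."""
    return min(
        candidates,
        key=lambda cd: rms_error(cd, records, projected_area, mooring_stiffness),
    )


# Hypothetical sweep range: 0.80 to 1.60 in steps of 0.05.
CANDIDATES = [0.8 + 0.05 * i for i in range(17)]
```

A real digital twin would run the full coupled analysis model for each candidate instead of this closed-form offset, and would include wind loads, but the sweep-and-compare loop has the same shape.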

The fully developed digital twin is also capable of containing or providing all the data required for the integrity management process. A complete digital twin facilitates the integrity management process by providing:

- A full suite of data for the history of the facility:

  - Documents spanning the life cycle of the asset:

    - Reports

    - Drawings

    - Manuals

  - Measured data

  - Inspection data

  - Analysis data and analytical models

- Capabilities to evaluate and assess the system:

  - Historical conditions

  - Present conditions

  - Future conditions

Physics-based models vs data science applications. Typical discussions of digitalization focus on machine learning or artificial intelligence. Fortunately, the capabilities of a fully developed digital twin allow both physics-based models and data science techniques to be applied, either separately or in combination. In general, physics-based models are better applied in situations where complete or accurate data are unavailable. The previous example of evaluating floating facility offset measured by GPS is very powerful for static offsets, but the typical dynamic offset that contributes most to fatigue damage is too small to be measured accurately by many offshore GPS devices.

A solution based solely on machine learning techniques using GPS data would therefore not provide accurate predictions. Conversely, machine learning techniques excel at identifying trends that are not well understood and would be beyond the capabilities of a physics-based model. These solutions are especially powerful when data are available from a significant number of similar or identical components.

Many offshore components are designed to not allow for failure. Risers and tendons use fatigue safety factors of 10 or greater. Performing failure prediction using big data solutions for these components is difficult, if not impossible, due to the lack of failure data. For these cases, a physics-based model is required to provide failure predictions. Similarly, many risers, tendons and mooring lines are unique designs, where data collected on components on one facility may not have direct applicability to similar components on another facility. Other components, such as pumps, have hundreds or thousands of installations that provide ideal settings for data science applications. Applying the right tool for each situation provides the appropriate insight required by the asset owner.
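To illustrate why failure data are scarce for such components, the following minimal sketch applies Miner's rule with a single-slope S-N curve and checks the calculated fatigue life against the safety factor. The S-N parameters and stress histogram are illustrative assumptions, not values from any design code or from the article.

```python
def annual_miner_damage(stress_histogram, sn_intercept, sn_slope):
    """Accumulated Miner's-rule damage per year.

    stress_histogram: list of (stress_range_MPa, cycles_per_year) pairs.
    Allowable cycles at each stress range: N = sn_intercept * S ** (-sn_slope).
    """
    return sum(
        cycles / (sn_intercept * stress_range ** -sn_slope)
        for stress_range, cycles in stress_histogram
    )


def fatigue_life_years(annual_damage):
    """Calculated fatigue life: years until accumulated damage reaches 1.0."""
    return 1.0 / annual_damage


def meets_fatigue_criterion(annual_damage, service_life_years, safety_factor=10.0):
    """True if the calculated life is at least safety_factor times the service life."""
    return fatigue_life_years(annual_damage) >= safety_factor * service_life_years


# Hypothetical inputs for illustration only.
HISTOGRAM = [(10.0, 1.0e6), (20.0, 1.0e5)]
damage = annual_miner_damage(HISTOGRAM, sn_intercept=1.0e12, sn_slope=3.0)
```

With a safety factor of 10, a component intended for a 25-year service life must show a calculated fatigue life of at least 250 years, so fatigue failures observed in service are rare by design.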

For many applications, both physics-based models and data science applications can be applied in a cooperative manner. For applications where insufficient or low-quality data are available for data science applications, a physics-based model can be used to generate or refine the required data. For applications better suited to data science techniques, a physics-based model could be used to determine which data are most appropriate for the intended solution.

Advances in computing and information technology. Advances in computing and information technology have greatly increased the ability to implement digitalization solutions. Edge computing products can eliminate bandwidth issues by performing on-site computations and providing low-bandwidth output data. Cloud computing technology is typically thought of in terms of providing seemingly infinite processing power and low-cost data storage; however, cloud technology offers significantly more benefits, including scalability and ease of collaboration. Advances in scalable cloud compute resources, as well as connectivity, have significantly reduced the barrier to entry for organizations to take advantage of these resources in everyday workflows.
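One way edge processing can reduce bandwidth is to transmit summary statistics instead of raw samples. The sketch below is a simplified assumption of how that might look, not a description of any particular edge product; the function names and window size are hypothetical.

```python
from statistics import mean, pstdev


def summarize_window(samples):
    """Reduce one window of raw sensor samples to a few summary values."""
    return {
        "count": len(samples),
        "mean": mean(samples),
        "std": pstdev(samples),  # population standard deviation of the window
        "min": min(samples),
        "max": max(samples),
    }


def summarize_stream(samples, window_size):
    """Split a sample list into consecutive windows and summarize each one."""
    return [
        summarize_window(samples[start:start + window_size])
        for start in range(0, len(samples), window_size)
    ]


# Example: eight raw samples become two five-value summaries.
raw = [1.0, 2.0, 3.0, 4.0, 10.0, 10.0, 10.0, 10.0]
summaries = summarize_stream(raw, window_size=4)
```

For a sensor sampled at 1 Hz, a 10-minute window would replace 600 raw values with five summary values, at the cost of losing the detailed time history unless it is also stored locally.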

While the continual improvements in processing power and reduced cost described by Moore’s law are a significant part of the digital revolution, the advancements made in the ability to store, transmit and access data are possibly even more important. A plethora of databases have been developed for different applications, including traditional relational (SQL) databases and non-relational (NoSQL) databases. Each database has different benefits and limitations. The selection and application of a database (or databases) for a given solution requires expertise in the database systems and an understanding of the application.

These resources, when coupled with traditional physics-based models and data science techniques, can yield an unprecedented amount of insight regarding system performance, and do so much more efficiently than traditional approaches.

Importance of domain expertise. The trend toward the digital oilfield has led to the availability of a myriad of new tools and techniques, which are increasingly easy to implement. The application of these tools and techniques must be done with the guidance of subject matter experts and technical authorities to reach their full potential benefits. A particular application may require domain expertise from multiple disciplines, such as engineering, information technology, commercial/business, human resources, management or finance. Experts from these different fields must work together to develop the required solutions.

Even for seemingly generic applications of machine learning algorithms, a deep understanding of the asset in question allows the correct type of data to be used in the training process. While machine learning algorithms excel at identifying trends, they are only able to do so when properly trained. Similarly, physics-based models must be used correctly. Identifying the correct model is an important first step, but all physics-based models rely on inputs and assumptions that also must be chosen carefully. Domain expertise also extends to how insight provided by digitalization tools can be leveraged to improve integrity management, maintenance and design. Regardless of the technological advancement that a particular tool may embody, the tool will essentially be useless if it cannot provide useful insight.

Overcoming the hype cycle. Gartner’s hype cycle captures a subjective path of the development of new technology. The hype cycle has five stages:

- Technology trigger

- Peak of inflated expectations

- Trough of disillusionment

- Slope of enlightenment

- Plateau of productivity.

While each new technology may not follow this exact path, the hype cycle is a visual representation of the challenges new technology faces. In the general scientific/engineering community, many new discoveries begin in academia with little regard for practical application. Over time, the usefulness of these discoveries is evaluated. Some discoveries may never find a practical application. Other discoveries may be used in a manner completely unintended during their development. Classic examples of this include the development of a rubber substitute that became Silly Putty and the development of a “failed” super-strong adhesive that led to Post-it Notes. While these examples may seem trivial, the same process applies to many of the new digital oilfield tools being developed in the industry.

As a whole, the oil and gas industry has collected petabytes of data. Much of it remains unused and unrefined. Learning how best to use these data is a process that will have successes and failures. A reported 25% to 85% of artificial intelligence initiatives do not deliver on their stated goals. This does not mean that AI is not a beneficial technology, but it does mean that our understanding of the useful application of AI technology is not fully developed. These failures may contribute to temporary disillusionment; however, many companies have already reported great successes, such as using machine learning techniques to help identify oil and gas formations from seismic data, and using digital twins to enhance integrity management programs. Learning from the failures and building upon the successes will allow the industry to fully benefit from the capabilities of the digital oil field.

CONCLUSIONS

The evolution of digital twins for floating production systems has created integrity management tools that greatly improve an operator’s ability to maintain and operate its facilities. By embodying the processes and methodologies that have been codified in the API IM suite of recommended practices, the digital twin provides most of, if not all of, the information required for a complete integrity management program. By leveraging both physics-based analytical models and data science techniques, such as machine learning, the benefits of the digital twin are further expanded by facilitating predictive and prognostic maintenance programs.

The implementation cost for digitalization tools is increasingly reduced by advances in computing and information technology, allowing for greater analytical capabilities as well as data storage and transfer. The development of these tools, with the guidance of subject matter experts, enhances their value by focusing on the most needed applications. This also avoids the negative effects of the hype cycle that occur when investment is made in tools that prove to be impractical.
